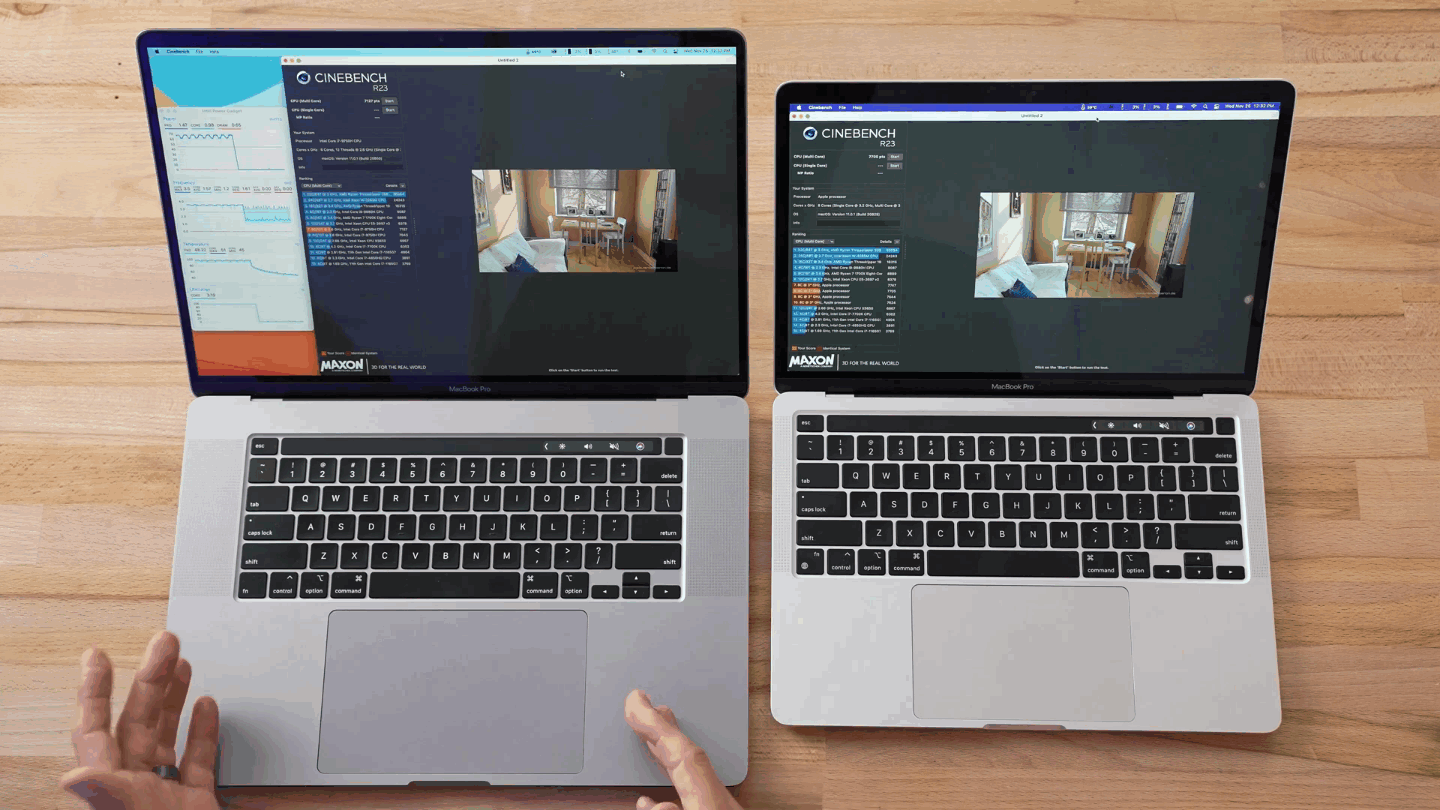

Image 2 - Benchmark results on a custom model (Colab: 87.8s Colab (augmentation): 286.8s M1 Pro: 71s M1 Pro (augmentation): 127.8s) (image by author) Keep in mind that two models were trained, one with and one without data augmentation:

We’ll now compare the average training time per epoch for both M1 Pro and Google Colab on the custom model architecture. Google Colab - Data Science Benchmark Results Print( f 'Duration: ')įinally, let’s see the results of the benchmarks. Conv2D(filters = 32, kernel_size =( 3, 3), activation = 'relu'), MaxPool2D(pool_size =( 2, 2), padding = 'same'), # USED ON A TEST WITH DATA AUGMENTATION train_datagen = tf. Data loading # USED ON A TEST WITHOUT DATA AUGMENTATION train_datagen = tf.

Use only a single pair of train_datagen and valid_datagen at a time: I’ve split this test into two parts - a model with and without data augmentation. Let’s go over the code used in the tests.
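To make the per-epoch comparison concrete, here is a minimal sketch of how an average epoch duration could be measured and how the reported numbers compare. The helper names `average_epoch_time` and `speedup` and the stand-in workload are hypothetical and not taken from the original test code; only the four timings come from the benchmark results.

```python
import time

# Average seconds per epoch, as reported in the benchmark results.
# Keys are (platform, uses_augmentation).
REPORTED = {
    ("Colab", False): 87.8,
    ("Colab", True): 286.8,
    ("M1 Pro", False): 71.0,
    ("M1 Pro", True): 127.8,
}


def average_epoch_time(run_epoch, epochs):
    """Call `run_epoch` `epochs` times and return the mean wall-clock seconds."""
    durations = []
    for _ in range(epochs):
        start = time.perf_counter()
        run_epoch()
        durations.append(time.perf_counter() - start)
    return sum(durations) / len(durations)


def speedup(slower, faster):
    """Return how many times shorter `faster` is compared with `slower`."""
    return slower / faster


plain = speedup(REPORTED[("Colab", False)], REPORTED[("M1 Pro", False)])
augmented = speedup(REPORTED[("Colab", True)], REPORTED[("M1 Pro", True)])
print(f"Without augmentation: M1 Pro is {plain:.2f}x faster than Colab")
print(f"With augmentation:    M1 Pro is {augmented:.2f}x faster than Colab")

# Hypothetical stand-in for one training epoch; it only demonstrates the timer.
mean_seconds = average_epoch_time(lambda: sum(range(100_000)), epochs=3)
print(f"Duration: {mean_seconds:.4f}s per epoch (stand-in workload)")
```

With the reported timings, the M1 Pro comes out roughly 1.24x faster than Colab without augmentation and roughly 2.24x faster with it. In a real run, the lambda would be replaced by whatever function trains the model for one epoch.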
